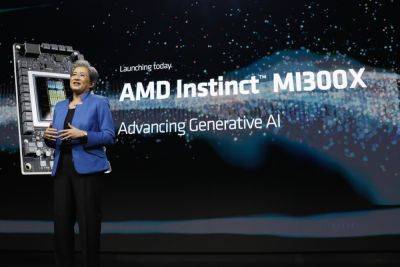

Survey Reveals AI Professionals Considering Switching From NVIDIA To AMD, Cite Instinct MI300X Performance & Cost

09.03.2024 - 22:49 / wccftech.com / Muhammad Zuhair

A recent survey suggests that a sizable share of AI professionals are looking to switch from NVIDIA GPUs to AMD's Instinct MI300X.

AMD's Instinct MI300X May Be The Gateway To The Firm's Entrance In The Ongoing AI "Gold Rush" As It Attracts Huge Interest

Jeff Tatarchuk of TensorWave recently surveyed 82 engineers and AI professionals and reports that around 50% of them expressed confidence in using the AMD Instinct MI300X GPU, citing its better price-to-performance ratio and wider availability compared to counterparts such as NVIDIA's H100. Tatarchuk also says that TensorWave will deploy MI300X AI accelerators, which is more promising news for Team Red, given that its Instinct lineup has not yet seen the level of adoption that rivals like NVIDIA enjoy.

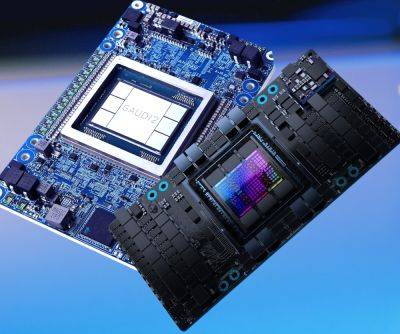

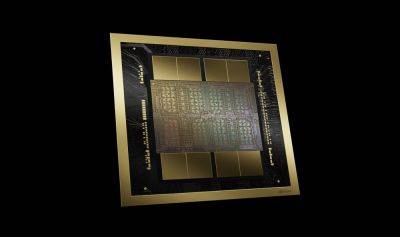

For a quick rundown, the Instinct MI300X AI GPU is built entirely on the CDNA 3 architecture. It combines 5nm and 6nm IPs to deliver up to 153 billion transistors. Memory has also seen a big upgrade, with the MI300X offering 192 GB of HBM3, 50% more capacity than its predecessor, the MI250X (128 GB). Here is how it compares to NVIDIA's H100:

- 2.4X Higher Memory Capacity

- 1.6X Higher Memory Bandwidth

- 1.3X FP8 TFLOPS

- 1.3X FP16 TFLOPS

- Up To 20% Faster Vs H100 (Llama 2 70B) In 1v1 Comparison

- Up To 20% Faster Vs H100 (FlashAttention 2) in 1v1 Comparison

- Up To 40% Faster Vs H100 (Llama 2 70B) in 8v8 Server

- Up To 60% Faster Vs H100 (Bloom 176B) In 8v8 Server
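The memory capacity multiplier can be checked with simple arithmetic. The sketch below starts from the MI250X's 128 GB and the 50% uplift quoted above. The 80 GB figure for the H100 is an assumption not stated in the article (it corresponds to the common SXM variant), and the function names are purely illustrative.

```python
# Figures from the article: MI250X capacity and the 50% generational uplift.
MI250X_CAPACITY_GB = 128
MI300X_UPLIFT = 0.50

# Assumption (not stated in the article): the H100 variant compared has 80 GB.
H100_CAPACITY_GB = 80


def mi300x_capacity_gb() -> float:
    """Capacity implied by '50% more than the MI250X'."""
    return MI250X_CAPACITY_GB * (1 + MI300X_UPLIFT)


def capacity_ratio_vs_h100() -> float:
    """How many times larger the MI300X memory is than the assumed H100."""
    return mi300x_capacity_gb() / H100_CAPACITY_GB


if __name__ == "__main__":
    print(f"MI300X capacity: {mi300x_capacity_gb():.0f} GB")
    print(f"Ratio vs H100:   {capacity_ratio_vs_h100():.1f}X")
```

Under that assumption, the result lines up with the "2.4X Higher Memory Capacity" entry in the list; a different H100 variant would give a different ratio.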

We recently reported how AMD's flagship Instinct AI accelerator has been causing "headaches" for market competitors. Not only are the performance gains with the MI300X substantial, but AMD has also timed its release well: NVIDIA is currently weighed down by order backlogs, which has hindered its ability to take on new clients. While Team Red didn't get the start it wanted, the upcoming period could prove fruitful for the firm, potentially putting it head-to-head with market competitors.

News Source: Jeff Tatarchuk

NVIDIA’s GeForce RTX 4060 Price Drops To $279, Getting Close To That “Sweet Perf/$” Spot

NVIDIA's GeForce RTX 4060 GPU is now retailing for as low as $279 US as it approaches its sweet spot positioning from its retail MSRP of $299.

Intel Outlines 40 TOPS NPU Performance As Minimum Requirement For Windows Copilot & AI PC Platforms

Intel has disclosed the compute performance that you would need to run Microsoft's Copilot locally on Windows-based AI PCs.

Qualcomm, Intel, & Google Join Hands To Come For NVIDIA, Plans On Dethroning CUDA Through oneAPI

Qualcomm, Intel, and Google have reportedly formed a new "strategic" coalition in an attempt to dethrone NVIDIA from the AI markets.

Intel & AMD CPUs Blocked By China: No Government PC To Use Chips From US Companies

The Chinese government has blocked the use of Intel and AMD CPUs in government computers, creating new hostilities in the tech industry.

AMD Radeon RX 7900 GRE GPU Memory Overclocking Now Supported In Latest Drivers, +15% Performance Uplift

AMD has removed the memory OC limits from its Radeon RX 7900 GRE GPU in the latest driver release, allowing for some impressive performance uplifts.

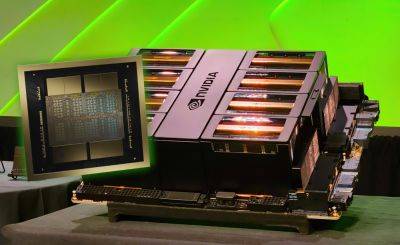

NVIDIA Blackwell AI GPUs Won’t Face Order Backlogs, Supply Chain Drastically Improved

NVIDIA is expecting a steady CoWoS packaging supply for its Blackwell AI GPUs as its CEO sees optimism in the supply chain.

NVIDIA’s Next-Gen RTX & AI GPU IP Incorporated Within Mediatek’s Upcoming Dimensity SOCs

MediaTek might be the first company to confirm the use of NVIDIA's next-gen RTX & AI GPU IP for its upcoming Dimensity SOCs for automobiles.

TinyCorp Ditches AMD For Its TinyBox AI Package, Opts For NVIDIA or Intel Options

TinyCorp, the company that has recently made headlines due to its AI venture, has ultimately decided to part ways with AMD due to the firmware constraints they are witnessing and now utilizing NVIDIA and Intel hardware.

NVIDIA Blackwell GPUs Cost Around $30K-$40K, $10 Billion Development Cost For The Fastest AI Chip On The Planet

NVIDIA's Blackwell AI GPUs unveiled at GTC 2024, will cost a hefty price for potential buyers, as the firm is estimated to have poured several billion dollars into the project.

AMD To Ship Huge Quantities Of Instinct MI300X Accelerators, Capturing 7% of AI Market

AMD's "gold rush" time in the AI markets might finally come, as industry reports that the firm faces massive demand for its cutting-edge MI300X AI accelerators.

DRAM Cache For GPUs Improves Performance By Up To 12.5x While Significantly Reducing Power Versus HBM

A new research paper has discovered the usefulness of DRAM cache for GPUs which can help enable higher performance at low power.