Two asteroids passed Earth in close proximity yesterday. One of them was nearly 120 feet wide, which is nearly the size of an aircraft! Despite their close approaches, these asteroids did not pose a threat to Earth and had no chance of impact. But how do these space rocks come close to the planet? NASA says this happens due to the interaction with a large planet's gravitational field, which can send them tumbling towards a planet, raising a potential impact scenario. With the help of its advanced tech, the US Space Agency has shed light on another asteroid that is set to pass Earth today, February 29.

We need to know how AI firms fight deepfakes

12.02.2024 - 13:09 / tech.hindustantimes.com / Ai

When people fret about artificial intelligence, it's not just due to what they see in the future but what they remember from the past -- notably the toxic effects of social media. For years, misinformation and hate speech evaded Facebook and Twitter's policing systems and spread around the globe. Now deepfakes are infiltrating those same platforms, and while Facebook is still responsible for how bad stuff gets distributed, the AI companies making them have a clean-up role too. Unfortunately, just like the social media firms before them, they're carrying out that work behind closed doors.

I reached out to a dozen generative AI firms whose tools could generate photorealistic images, videos, text and voices, to ask how they made sure that their users complied with their rules.(1) Ten replied, all confirming that they used software to monitor what their users churned out, and most said they had humans checking those systems too. Hardly any agreed to reveal how many humans were tasked with overseeing those systems.

And why should they? Unlike other industries like pharmaceuticals, autos and food, AI companies have no regulatory obligation to divulge the details of their safety practices. They, like social media firms, can be as mysterious about that work as they want, and that will likely remain the case for years to come. Europe's upcoming AI Act has touted “transparency requirements,” but it's unclear if it will force AI firms to have their safety practices audited in the same way that car manufacturers and foodmakers do.

For those other industries, it took decades to adopt strict safety standards. But the world can't afford for AI tools to have free rein for that long when they're evolving so rapidly. Midjourney recently updated its software to generate images that were so photorealistic they could show the skin pores and fine lines of politicians. At the start of a huge election year when close to half the world will go to the polls, a gaping regulatory vacuum means AI-generated content could have a devastating impact on democracy, women's rights, the creative arts and more.

Here are some ways to address the problem. One is to push AI companies to be more transparent about their safety practices, which starts with asking questions. When I reached out to OpenAI, Microsoft, Midjourney and others, I made the questions simple: how do you enforce your rules using software and humans, and how many humans do that work?

Most were willing to share several paragraphs of detail about their processes for preventing misuse (albeit in vague public-relations speak). OpenAI, for instance, had two teams of people helping to retrain its AI models to make them safer or react to harmful outputs. The company behind controversial

'AI godfather', others urge more deepfake regulation in open letter

Artificial intelligence experts and industry executives, including one of the technology's trailblazers Yoshua Bengio, have signed an open letter calling for more regulation around the creation of deepfakes, citing potential risks to society.

Google unveils Gemma, a family of AI models for open-source developers; Know all about it

Google has announced its latest innovation in artificial intelligence (AI), called Gemma, on the heels of the launch of Gemini 1.5 Pro a few days ago. Developed by Google DeepMind, it is a family of lightweight, open AI models that Google says is built from the same research and technology that was used to create Gemini.

5 things about AI you may have missed today: Samsung to provide AI training, Truecaller eyes AI to fight fraud, more

AI roundup: Samsung launched a new program at the Visvesvaraya Technological University that will provide upskilling and training opportunities in AI and IoT to over 1100 students to make them future-ready. Truecaller's CEO said the company is turning to AI for growth and to combat fraud. Know what happened in the world of AI today.

GTA 6 leak hints at AI-powered NPCs leading to smarter interactions; Know what’s coming

Every week, a new GTA 6 leak surfaces revealing exciting details about it. The next Grand Theft Auto game, which is confirmed to be released in 2025, will likely debut with groundbreaking features that take the gameplay experience to the next level. With the GTA 6 trailer, we've already seen the likely open world, characters, locations and some gameplay elements. One of the more interesting leaks hints at the possibility of smarter NPCs in GTA 6 which harness the power of artificial intelligence (AI).

Crackdown on deepfakes: MCA, Meta partner to curb AI-generated misinformation with WhatsApp helpline

The Misinformation Combat Alliance (MCA) and Meta have announced that a dedicated fact-checking helpline on WhatsApp - aimed at combating deepfakes and deceptive AI-generated content - will be available for the public in March 2024.

Visme AI-powered presentation tool: Know how to create stunning visual designs effortlessly

Presentations are now a permanent feature in all corporate settings, and they have even infiltrated areas that were once considered anathema to them: visual elements, art and designs. Do you know why making a visually pleasing presentation is necessary? An informative piece of documentation should not consist only of text and graphs, as that makes the presentation look boring. To make presentations engaging, one must include different types of visual elements such as pictures, videos, animations, icons, illustrations and more. This enables the speaker to draw more attention to the details and keep everyone hooked throughout the presentation. While there are multiple presentation tools available in the market, there is one tool called “Visme” that shines in providing the best presentation editing tools and techniques. Know more about the Visme presentation tool here.

How iOS 18’s Forthcoming AI Features Could Make The iPhone 16 a Worthy Upgrade Over The iPhone 15

Apple is invested in bringing AI technology to its product line, with the iPhone being the prime candidate to receive many new additions. Apple's process to bring AI to the iPhone is two-fold. While some features may be part of iOS 18, others will be exclusive to the iPhone 16 lineup. Altogether, AI will make the iPhone 16 and iPhone 16 Pro models a worthy upgrade over the current models, considering the number of new features coming with iOS 18.

One Tech Tip: Ready to go beyond Google? Here's how to use new generative AI search sites

It's not just you. A lot of people think Google searches are getting worse. And the rise of generative AI chatbots is giving people new and different ways to look up information.

Make messaging fun! Know how to create GIFs on iPhone without third-party apps

In the colourful world of instant messaging, emojis and GIFs have grown to be essential tools for expressing emotions and adding flair to conversations. However, what happens when you cannot find the appropriate GIF to carry your message? Fear not, as we unveil a simple yet powerful technique to create your very own GIFs on iPhone, without the need for third-party apps.

AI smartphone without any apps! Know what Brain.ai and Deutsche Telekom are bringing

In some exciting yet confusing news, Deutsche Telekom, in collaboration with Brain.ai, will introduce an AI smartphone which will not contain any mobile apps. Yes, you read it right! A smartphone without apps. Now, you must be wondering how the smartphone will function. Well, it will consist of a digital assistant powered by AI which will conduct all the app tasks, from booking flight tickets to creating a day-to-day itinerary. The AI smartphone is likely to be launched at the Mobile World Congress 2024. Learn more about the AI phone here.

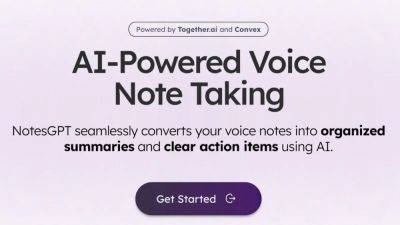

AI-powered NotesGPT turns your voice notes into organized summaries; Know how to use it

Have you ever recorded voice notes in a meeting with the aim of turning them into text later, only to realise it is quite an arduous task? You're not alone. While there are a lot of transcription apps in the market, none of them actually function the way we want them to. However, that has changed with the recent boom in artificial intelligence (AI). Nowadays, there is a vast range of AI apps that help you accomplish any and all tasks. One such app is NotesGPT, which not only converts your voice notes into text but also creates organized summaries of them. Know all about it.