Starfield offers a vast playspace to explore and interact with, and whilst it’s mostly locked behind loading screens, it still ends up being a wonderfully engrossing experience. Bethesda games have always allowed players to play a more villainous character, and what better way to do that than being a ship-stealing space pirate?

Alexa, What’s Your Future? Perhaps Finally Making Money

24.09.2023 - 01:11 / tech.hindustantimes.com

When Amazon.com Inc. executive Dave Limp announced in August that he would leave by the end of this year, I wrote that he would depart without having satisfactorily answered the question of what Alexa was for and why the gadget-buying public truly needed the voice-assistant technology.

Amazon seemed to be asking similar questions. Limp's unit, reported to have been burning through $5 billion a year, was hit hard by recent cutbacks at the company. Meanwhile, the explosion of AI, kick-started by the launch of ChatGPT, has drastically redefined what a digital assistant could be expected to do. Alexa looked comparatively stupid: ChatGPT users were writing essays; Alexa users were setting egg timers.

OpenAI's groundbreaking tool sent shockwaves through Silicon Valley, in no small part because it wrong-footed bigger tech companies that hadn't yet been able to put generative AI capabilities directly into the hands of consumers.

The shifting sands (ChatGPT, Amazon cutbacks, Limp's departure) have raised the question of where Amazon's devices unit goes from here. Limp's swan-song keynote this week, held at the company's new “HQ2” campus in Arlington, Virginia, attempted to show Alexa could close the gap on AI.

Crucially, the new phase of Alexa could mean a new business model in which users pay an additional monthly fee for a more sophisticated virtual assistant.

Will Alexa finally be able to make money for Amazon? In a nod to the question-and-answer relationship users have with Alexa, below is a conversation I had with Limp after his keynote. He's a chatter — it's edited for brevity and clarity. I'll jump in with my thoughts as we go.

Dave Lee: Last time we spoke, you were talking about ambient computing. Now you're instead calling it ambient “intelligence.” You mentioned “generative AI” within about 30 seconds of the keynote starting. ChatGPT has obviously had an impact. How has Amazon changed what it is doing?

Dave Limp, Amazon: Well, there's no way we could have shown you what we showed you [on Wednesday], and what we're going to be shipping before the end of the year, if we had started it when ChatGPT was announced. It's just impossible to do what you've seen us do in that period of time. For the past couple years in the background, we've been using generative AI [for building Alexa features]. But what has become pretty clear, at least to me and the team, is that as you feed these models more data, they get larger, they get better. And they haven't stopped getting better. The real hard work is fine-tuning that model for your use case. In the home, the use case is very different than on your phone or in your browser. And that's what we're spending the most time on now.

His argument is that Alexa offers a real-world

Nintendo's Weirdest 3DS Game May Finally Be Back From The Dead

Trademark registrations filed at the end of September 2023 suggest the upcoming return of an eccentric, Mii-centric social simulator originally released for the 3DS, a truly unique game in its genre. Despite its success, the game remained on the 3DS for its entire lifespan. There's never been word of a follow-up or port, but new information suggests that could change.

I.Q. Final finally arrives in North America as part of the PlayStation Classics Catalog

Sony has announced what games are being added to their various PlayStation Plus tiers this month, and buried with the rest of their Classics Catalog additions is I.Q. Final. It will be arriving on October 17.

Don't waste your money on the Seagate Expansion Card, the WD Black C50 is even cheaper

With any big sales event comes a myriad of digital storage deals. We gamers are spoiled for choice this Prime Day, with plenty of SSDs and portable hard drives to choose from.

Make Way….

Dundee, Scotland – 09 October 2023: Chaotic multiplayer “DIY racer” Make Way, from indie developer Ice Beam Games and publisher Secret Mode, is turbo-charging into Steam Next Fest today. Supporting 1-4 players, the Make Way demo gives players an early peek at the unbridled chaos expected in the full, cross-platform game for PC, Nintendo Switch, PS5, PS4, Xbox Series X|S, and Xbox One later this year.

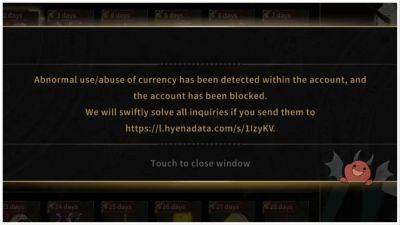

What in Hell is Bad Banning Drama Makes the Game Title Kind of Ironic

The latest What in Hell is Bad banning drama hasn’t got anything to do with the lewdness of the game – it’s because players are receiving bans for no reason. Plus, with multiple maintenance periods being put in place since the game’s launch, it’s not a good look for PrettyBusy.

You can finally change your name in Baldur’s Gate 3

A funny story about me: I did not know that “Tav” was the default name for the player character in Baldur’s Gate 3. I thought, like in other role-playing games I’ve played, it was a randomly generated name. “Tav,” I thought when I saw it, “not bad for a random name generator.” Then I found out lots of other people have Tavs, and learned the truth: I wasn’t impressed by a random selection; I had settled for vanilla. Mortifying, honestly.

One of the best RTS games ever made is finally making a comeback

Command and Conquer. Age of Empires. Company of Heroes. The RTS is one of PC gaming’s defining genres, delivering some of the greatest experiences to ever grace our beloved gaming rigs. But between strategy sims, city builders, 4X games, and more, it feels like the RTS has fallen a little out of favor in recent years. Thank the giant, hovering mouse cursor in the sky, then, that one of the best and most beloved real-time strategy series ever is finally making a comeback. Commandos Origins, a long-awaited prequel to Commandos, Commandos 2, and Commandos 3, is coming to Steam.

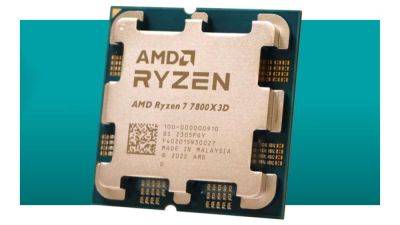

AMD's best gaming CPU is back on sale again, making a pitch to be the heart of your next gaming PC

AMD Ryzen 7 7800X3D | 8 cores | 16 threads | 5.0GHz | AM5 Socket | 96MB L3 cache | 120W TDP | $399.00 at Newegg (was $449.00, save $50)

The 7800X3D is pretty much one of the very best, if not the best CPU you can get for a pure gaming PC. Its performance is only matched by Intel's Core i9-13900K but that's a lot more expensive and uses more power. It's not as well rounded as the 13900K, so gamers also wanting strong productivity chops might want to consider something else. But if you're building a new PC for nothing but gaming, this is the one to get.

Space authenticity be damned, this Starfield mod plates your delectable food and makes me a lil hungry

I'm a sucker for good-looking video game food, which is why I was instantly drawn to a new Starfield mod that puts all of your culinary creations on dinner plates.

"Thanks for the memories": Overwatch League staff share emotional message after what might be the competition's final games

Overwatch League host Soe Gschwind ended coverage of the 2023 season with an emotional message as the future of the competition remains uncertain.

Cyberpunk 2077: Phantom Liberty finally makes me feel like the cyber ninja I always wanted to be

Cyberpunk 2077: Phantom Liberty is here with the 2.0 update, reinventing the game’s progression again. The new skill trees are Cyberpunk 2077’s second overhaul, after the 1.5 update first changed them.