The M4 iPad Pro's OLED display is potentially the best one on a tablet, as it uses a dual stack of OLED sheets for enhanced brightness and color accuracy. Apple also introduced a brand-new 13-inch variant of the iPad Air, which comes with an M2 chip for Pro-level performance. A YouTuber has now discovered that the new OLED iPad Pro and iPad Air models come with stronger magnets than before, despite a thinner overall design.

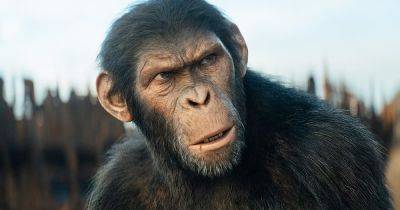

Kingdom of the Planet of the Apes’ VFX lead argues that the movie uses AI ethically

10.05.2024 - 14:13 / polygon.com / Josh Brolin

Right now, every industry faces discussions about how artificial intelligence might help or hinder work. In movies, creators are concerned that their work might be stolen to train AI replacements, their future jobs might be taken by machines, or even that the entire process of filmmaking could become fully automated, removing the need for everything from directors to actors to everybody behind the scenes.

But “AI” is far more complicated than ChatGPT and Sora, the kinds of publicly accessible tools that crop up on social media. For visual effects artists, like those at Wētā FX who worked on Kingdom of the Planet of the Apes, machine learning can be just another powerful tool in an artistic arsenal, used to make movies bigger and better-looking than before. Kingdom visual effects supervisor Erik Winquist sat down with Polygon ahead of the movie’s release and discussed the ways AI tools were key to making the movie, and how the limitations on those tools still make the human element key to the process.

For the making of Kingdom of the Planet of the Apes, Winquist says some of the most important machine-learning tools were called “solvers.”

“A solver, essentially, is just taking a bunch of data — whether that’s the dots on an actor’s face [or] on their mocap suit — and running an algorithm,” Winquist explains. “[It’s] trying to find the least amount of error, essentially trying to match up where those points are in 3D space, to a joint on the actor’s body, their puppet’s body, let’s say. Or in the case of a simulation, a solver is essentially taking where every single point — in the water sim, say — was in the previous frame, looking at its velocity, and saying, ‘Oh, therefore it should be here [in the next frame],’ and applying physics every step of the way.”
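The two kinds of solver Winquist describes can be illustrated with a toy sketch. This is not Wētā's code or anything close to a production tool; the function names, the constant-gravity force, and the translation-only fit are all assumptions chosen to keep the example small. The first function advances each simulated point from its previous position and velocity, and the second finds the single offset that gives the least squared error between tracked dots and a puppet's joints.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]

# Assumption for illustration: the only force is constant gravity.
GRAVITY: Vec3 = (0.0, -9.81, 0.0)


def step_particles(
    positions: List[Vec3], velocities: List[Vec3], dt: float
) -> Tuple[List[Vec3], List[Vec3]]:
    """Advance every point one frame: update velocity, then position."""
    new_positions: List[Vec3] = []
    new_velocities: List[Vec3] = []
    for p, v in zip(positions, velocities):
        # Apply physics to the velocity, then move the point with it.
        v_next = tuple(vi + gi * dt for vi, gi in zip(v, GRAVITY))
        p_next = tuple(pi + vi * dt for pi, vi in zip(p, v_next))
        new_velocities.append(v_next)
        new_positions.append(p_next)
    return new_positions, new_velocities


def best_offset(markers: List[Vec3], joints: List[Vec3]) -> Vec3:
    """Offset that minimizes the summed squared distance between
    tracked markers and the joints they correspond to.

    For a pure translation, the least-squares answer is the mean of
    the per-point differences (marker minus joint).
    """
    n = len(markers)
    return tuple(
        sum(m[i] - j[i] for m, j in zip(markers, joints)) / n
        for i in range(3)
    )
```

A real facial or body solver fits rotations and many more parameters, and a real water simulation handles pressure and collisions, but the shape of the problem is the same: minimize error against captured data, or step physics forward one frame at a time.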

For the faces of Kingdom’s many ape characters, Winquist says the solvers might manipulate digital ape models to roughly match the actors’ mouth shapes and lip-synching, giving the faces the vague creases and wrinkles you might expect to form with each word. (Winquist says Wētā originally developed this technology to map Josh Brolin’s Thanos performance onto a digital model in the Avengers movies.) After a solver works its magic, the Wētā artists get to work on the hard part: taking the images the solver started, and polishing them so they look perfect. This is, for Winquist, where the real artistry comes in.

“It meant that our facial animators can use it as a stepping-stone, essentially, or a trampoline,” Winquist explains with a laugh. “So [they can] spend their time really polishing and looking for any places where the solver was doing something on an ape face that didn’t really convey what the actor was doing.”

Instead of having to

Zelda: Tears of the Kingdom players chase the elemental dragons after one player discovers a different Triforce on their backs

The Legend of Zelda: Tears of the Kingdom players have been spurred into investigating the game's three elemental dragons, after one player discovered they're hiding a neat little Triforce design on their backs.

Lord of the Rings: The Hunt for Gollum Story Details Teased, Won’t Be 4th Movie in the Trilogy

The Lord of the Rings: The Hunt for Gollum won’t be “just the fourth film in the [original] trilogy” of Lord of the Rings movies.

Benchmark Shows That Pixel 8a Uses A Slower Tensor G3 Compared To The One Found In The Pixel 8 And Pixel 8 Pro

The recently released Pixel 8a is one of the best phones on the market for those who are looking for a device that is affordable but also packs a punch. There are several reasons to choose this device, like the fact that it is more affordable than its elder siblings while maintaining most of what makes the Pixel 8 and Pixel 8 Pro so good. However, benchmarks of the newly released budget Pixel tell a slightly different story.

Planet of the Apes Reboot Movies Ranked After Kingdom

Planet of the Apes is one of those franchises that won’t go away. The first entry hit theaters like an atomic bomb in 1968 and spawned four sequels, two television series, and a remake steered by director Tim Burton in 2001. More recently, the Planet of the Apes reboot movies kicked off with 2011’s successful Rise of the Planet of the Apes, which paved the way for Matt Reeves’ critical and commercial smash Dawn of the Planet of the Apes in 2014 and War for the Planet of the Apes in 2017.

Netflix’s Bridgerton season 3A, X-Men ’97’s finale, and more new TV this week

There’s a lot of TV to weed through in any week, and this week is no exception — something I’m sure I’ve said about plenty of packed TV weeks, but what’re you gonna do! The television giveth, and giveth, and taketh away, and giveth some more.

Box Office Results: All Hail Kingdom of the Planet of the Apes

20th Century Studios’ Kingdom of the Planet of the Apes went bananas this weekend, raking in a reported $55-56M per Deadline. Strong previews and a $20 million Saturday propelled the sci-fi adventure to the top of the charts, while a $72.5M international haul pushed its global tally to $129M ($13.2M from Imax).

Kingdom of the Planet of the Apes’ posters and trailers all contain a huge spoiler

Spoiler warnings have become a polite way to signal to the internet that you’re about to discuss some aspect of a movie they might prefer not to know before seeing the film. People online have argued endlessly about what constitutes a spoiler and what needs a warning. But the question gets more complicated when a studio’s marketing for a movie is handing out the spoilers.

Play the Planet of the Apes game that time forgot after watching the new movie

Kingdom of the Planet of the Apes hits theaters this weekend. If you were hoping to find ways to spend more time in that world through video games, your options are surprisingly slim. Your only easy option is Planet of the Apes: Last Frontier. This is a game I’ve seen almost no one talk about since it was released, but it’s a fascinating title that warrants a revisit.

Kingdom of the Planet of the Apes Producers Have Vision for 5 More Movies

Kingdom of the Planet of the Apes is the fourth entry in the new Planet of the Apes franchise. If the team behind it have their say, there will be many more to come.

Former Helldivers 2 lead writer praises the shooter's community for uniting against the PSN mandate: "We trained them to fight together. And then they fought together"

A former Helldivers 2 lead writer has praised the shooter's community for uniting against the recent PSN mandate.

Diablo 4 lead says "obvious" bad ideas used to "fall through the cracks" because the devs didn't fully realize "doing this 2,000 times is actually terrible"

Diablo 4 lead class designer Adam Jackson says some features that were widely maligned simply didn't go through enough testing for Blizzard to realize they were tedious in design.