NVIDIA has reportedly finalized a deal with TSMC to utilize its 3nm process node for the creation of its next-gen Blackwell B100 GPUs for AI.

NVIDIA TensorRT-LLM Boosts Large Language Models Immensely, Up To 8x Gain on Hopper GPUs

08.09.2023 - 17:25 / wccftech.com

NVIDIA today announced a brand-new AI software stack, TensorRT-LLM, which boosts large language model (LLM) performance across its GPUs.

NVIDIA's TensorRT-LLM is announced as a highly optimized, open-source library that enables the fastest inference performance across all large language models on NVIDIA's AI GPUs such as Hopper. NVIDIA has worked with LLM developers across the open-source community to optimize the models for its GPUs, using the latest AI kernels and cutting-edge techniques such as SmoothQuant, FlashAttention, and fMHA. The open-source foundation includes ready-to-run, state-of-the-art, inference-optimized versions of LLMs such as GPT-3 (175B), Llama, Falcon (180B), and Bloom, just to name a few.

TensorRT-LLM is also optimized to automatically parallelize a model across multiple NVLink servers connected by InfiniBand. Previously, a large language model had to be split manually across multiple servers and GPUs, which should no longer be necessary with TensorRT-LLM.
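
The article does not say which parallelization scheme TensorRT-LLM uses. One common way to spread a model across GPUs is tensor parallelism, where a weight matrix is split by columns and each device computes its own slice of the output. The sketch below illustrates that idea in plain Python, with "devices" simulated as list shards; the function names are hypothetical and this is not TensorRT-LLM's API.

```python
def matmul(A, B):
    """Plain matrix product of two lists-of-lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def split_columns(W, parts):
    """Split W into `parts` column shards of near-equal width."""
    n_cols = len(W[0])
    bounds = [round(i * n_cols / parts) for i in range(parts + 1)]
    return [[row[lo:hi] for row in W] for lo, hi in zip(bounds, bounds[1:])]

def tensor_parallel_matmul(X, W, parts):
    """Each simulated device multiplies X by its shard; results are concatenated."""
    partials = [matmul(X, shard) for shard in split_columns(W, parts)]
    return [sum((p[i] for p in partials), []) for i in range(len(X))]

X = [[1, 2], [3, 4]]
W = [[1, 0, 2, 1], [0, 1, 1, 3]]
print(tensor_parallel_matmul(X, W, parts=2) == matmul(X, W))
```

On real hardware the concatenation step is a collective operation over the interconnect, which is why the link between GPUs and servers matters for this kind of split.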

One of the biggest updates that TensorRT-LLM brings is a new scheduler known as in-flight batching, which allows work to enter and exit the GPU independently of other tasks. It allows several smaller queries to be processed dynamically while large, compute-intensive requests run on the same GPU. This makes the GPU more efficient and leads to large gains in throughput, of up to 2x, on GPUs such as the H100.
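
To see why letting requests enter and exit independently helps, here is a toy simulation, not NVIDIA's scheduler. It assumes each request needs a fixed number of generation steps and that the GPU has a fixed number of batch slots; both function names are made up for illustration.

```python
from collections import deque

def static_batching_steps(lengths, slots):
    """Requests run in fixed batches; each batch lasts as long as its longest request."""
    total = 0
    for i in range(0, len(lengths), slots):
        total += max(lengths[i:i + slots])
    return total

def in_flight_batching_steps(lengths, slots):
    """A finished request frees its slot, and a queued request takes it on the next step."""
    queue = deque(lengths)
    active = []  # remaining steps for each running request
    total = 0
    while queue or active:
        # Fill any free slots from the queue before the next step.
        while queue and len(active) < slots:
            active.append(queue.popleft())
        # Run one generation step for every active request, then drop finished ones.
        active = [remaining - 1 for remaining in active]
        active = [remaining for remaining in active if remaining > 0]
        total += 1
    return total

# Mostly short queries mixed with two long, compute-heavy requests.
requests = [2, 2, 16, 2, 2, 2, 16, 2]
print("static batching:   ", static_batching_steps(requests, slots=4), "steps")
print("in-flight batching:", in_flight_batching_steps(requests, slots=4), "steps")
```

In this toy workload the short queries no longer wait for a long request in the same batch to finish, so the same work completes in fewer steps. The actual gain depends on the request mix.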

The TensorRT-LLM stack is also optimized around Hopper's Transformer Engine and its FP8 compute capabilities. The library offers automatic FP8 conversion, a DL compiler for kernel fusion, and a mixed-precision optimizer, along with support for NVIDIA's own SmoothQuant algorithm, which enables 8-bit quantization performance without accuracy loss.
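
The article does not describe how TensorRT-LLM implements SmoothQuant. As a rough illustration of the core idea only, the published technique rescales each input channel so that large activation outliers are partly shifted into the weights before quantization, leaving the layer's output unchanged. The sketch below shows just that smoothing step in plain Python with made-up example values; the 8-bit quantization itself is omitted, and the helper names are hypothetical.

```python
def smooth_scales(X, W, alpha=0.5):
    """Per-input-channel factors s_j = max|X[:, j]|**alpha / max|W[j, :]|**(1 - alpha).

    Assumes every channel has a nonzero maximum in both X and W.
    """
    scales = []
    for j in range(len(W)):
        act_max = max(abs(row[j]) for row in X)
        w_max = max(abs(w) for w in W[j])
        scales.append(act_max ** alpha / w_max ** (1 - alpha))
    return scales

def matmul(A, B):
    """Plain matrix product of two lists-of-lists."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

X = [[0.5, 40.0], [-1.0, -64.0]]  # activations; channel 1 holds the outliers
W = [[0.2, -0.4], [0.1, 0.3]]     # weights, one row per input channel

s = smooth_scales(X, W)
X_s = [[x / s[j] for j, x in enumerate(row)] for row in X]  # activations divided by s
W_s = [[w * s[j] for w in W[j]] for j in range(len(W))]     # weights multiplied by s

print("largest activation before:", max(abs(x) for row in X for x in row))
print("largest activation after: ", max(abs(x) for row in X_s for x in row))
print("output before:", matmul(X, W))
print("output after: ", matmul(X_s, W_s))
```

Because the activations are divided by the same factors the weights are multiplied by, the product is mathematically identical, while the activation range that an 8-bit format has to cover becomes much narrower.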

As for the performance figures, NVIDIA compares the A100 against the H100 as it performed in August and against the H100 running TensorRT-LLM. In GPT-J 6B (inference), the H100 already offered a 4x gain over the A100, but with TensorRT-LLM the company doubles that performance, which leads to an 8x gain in this specific test. In Llama 2, we see up to a 5x gain over the A100 with TensorRT-LLM and almost a 2x gain over the standard H100 without TensorRT-LLM.
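
The way these factors combine is simple multiplication. The short sketch below works through it using only the rounded figures quoted above, so the Llama 2 result is a rough estimate rather than a number NVIDIA has published.

```python
def combined_speedup(*factors):
    """Multiply successive relative speedups into one overall factor."""
    total = 1.0
    for factor in factors:
        total *= factor
    return total

# GPT-J 6B: H100 over A100 (4x), then TensorRT-LLM over the plain H100 (2x).
gptj_total = combined_speedup(4.0, 2.0)

# Llama 2: "up to 5x" overall and "almost 2x" from TensorRT-LLM are rounded
# figures, so the implied H100-only gain is an approximation.
llama2_h100_only = 5.0 / 2.0

print(f"GPT-J 6B overall gain vs. A100: {gptj_total:.0f}x")
print(f"Llama 2 implied H100-only gain: about {llama2_h100_only:.1f}x")
```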

NVIDIA states that it is working with leading companies running inference workloads, such as Meta, Grammarly, Deci, and Anyscale, to accelerate their LLMs using TensorRT-LLM. As for availability, TensorRT-LLM is available in early access now, with a full release expected next month. As for support, TensorRT-LLM will be supported by all NVIDIA data center and AI GPUs that are in production today, such as the A100, H100, L4, L40, L40S, HGX, and Grace Hopper.

Wake up, Samurai—Cyberpunk 2077: Phantom Liberty Nvidia GPU driver just dropped

It's nearly time to play Cyberpunk 2077: Phantom Liberty. And would you believe it's an absolute scorcher of a game expansion? Before that happens, however, there's still time to jump into the free 2.0 update to get your V ready for the new expansion. And don't forget to download the latest Nvidia Game Ready drivers, out today.

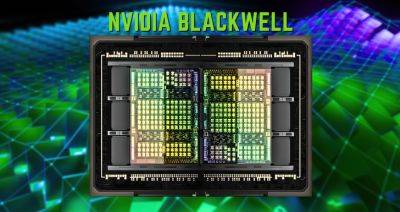

Nvidia's next-gen Blackwell GPUs rumored to be chiplet-based

Known Nvidia leaker kopite7kimi, who originally revealed the name of the company's next GPU architecture a few years ago, says Nvidia's next-gen data center GB100 Blackwell series graphics cards will have a core count similar to Ada Lovelace chips but will have "significant changes in its unit structure."

NVIDIA’s Next-Gen Blackwell GB100 GPUs Utilize Chiplet Design, Feature Significant Changes

NVIDIA's next-gen Blackwell GB100 GPUs for HPC & AI customers are rumored to go fully on board with a chiplet design, according to Kopite7kimi.

Nvidia Cash Geyser Can Cover Buybacks and Vital R&D

Investors fretting that Nvidia Corp.'s massive stock buyback allocation would leave it short of funds for vital research and development should take heart from the chipmaker's swelling free cash flow.

NVIDIA Reportedly Shipping 900 Tons of H100 AI GPUs This Quarter, Amounts to 300,000 Units

NVIDIA's H100 AI GPUs have been the talk of the industry, topping the charts with record sales. Research firm Omdia has disclosed that NVIDIA shipped 900 tons of H100s in Q2 2023, a striking figure.

NVIDIA GeForce RTX 4070 12 GB GPUs Are Now Available For $549 US

NVIDIA's GeForce RTX 4070 GPUs are now available at a starting price of $549 US at leading online retailers such as Newegg.

Latest Nvidia driver improves Starfield performance on RTX 30 and 40 series

A few months before release, it was revealed that Bethesda would be partnering with AMD for Starfield, likely leaving Nvidia and Intel users worried. Now, Team Green has pushed out a new driver update that is said to boost the game by an average of 5%.

Latest Nvidia driver improves Starfield performance for PCs with Resizable BAR

It’s no secret that Starfield requires a powerful gaming PC. Even if you have the latest generation hardware and the best graphics settings, the game will still crush your framerate. PC optimizations are on the way at last though for Nvidia users, because the latest game drivers improve Starfield performance by a small margin on systems that support Resizable BAR.

Nvidia boosts Starfield performance with GPU driver update

By Tom Warren, a senior editor covering Microsoft, PC gaming, console, and tech. He founded WinRumors, a site dedicated to Microsoft news, before joining The Verge in 2012.

Intel Gaudi2 Accelerator MLPerf Benchmarks Show A Viable AI Alternative To NVIDIA’s GPUs

Intel has released updated MLPerf benchmarks of its Gaudi2 accelerators, which have been the talk of the town recently. The data shows that the Gaudi AI accelerators are shaping up to be a viable alternative to NVIDIA's H100 GPUs, allowing Intel to get its share of the "AI hype".

NVIDIA Posts Big AI Numbers In MLPerf Inference v3.1 Benchmarks With Hopper H100, GH200 Superchips & L4 GPUs

NVIDIA has released its official MLPerf Inference v3.1 performance benchmarks running on the world's fastest AI GPUs such as Hopper H100, GH200 & L4.